To this end, this paper concentrates on FrodoKEM, a key encapsulation mechanism submitted to NIST as a potential post-quantum standard. Thus, it is important, during the scrutiny of the candidates, to explore the potential of implementing these algorithms on a variety of platforms and to assess the overhead of adding countermeasures. NIST have also stated that algorithms which can be made robust against physical attacks in an effective and efficient way will be preferred. The reason for such a large number of candidates is because lattice-based algorithms are extremely promising: they can be implemented efficiently and they are extremely versatile, allowing to efficiently implement cryptographic primitives such as digital signatures, key encapsulation, and identity-based encryption.Īs in the past case for standardising AES and SHA-3, the parameters which will be used for selection include the security of the algorithm and its efficiency when implemented in hardware and software.

Lattice-based cryptographic algorithms are a class of algorithms which base their security on the hardness of problems such as finding the shortest non-zero vector in a lattice. The contest started at the end of 2017 and is expected to run for 5–7 years.Īpproximately seventy algorithms were submitted to the standardisation process, with the large majority of them being based on the hardness of lattice problems. The most notable example of these activities is the open contest that NIST is running for the selection of the next public-key standardised algorithms. This effort is supported by governmental and standardisation agencies, which are pushing for new and quantum resistant algorithms. To promptly react to the threat, the scientific community started to study, propose, and implement public-key algorithms, to be deployed on classical computers, but based on problems computationally difficult to solve also using a quantum or classical computer. These problems, however, will be solved in polynomial time by a machine capable of executing Shor’s algorithm .

The security of our current public-key infrastructure is based on the computational hardness of the integer factorisation problem (RSA) and the discrete logarithm problem (ECC). Certain fields, such as biology and physics, would certainly benefit from this “quantum speed up” however, this could be disastrous for security. The future development of a scalable quantum computer will allow us to solve, in polynomial time, several problems which are considered intractable for classical computers. Overall, we significantly increase the throughput of FrodoKEM for encapsulation we see a \(16\times \) speed-up, achieving 825 operations per second, and for decapsulation we see a \(14\times \) speed-up, achieving 763 operations per second, compared to the previous state of the art, whilst also maintaining a similar FPGA area footprint of less than 2000 slices. The parallelisations proposed also complement the addition of first-order masking to the decapsulation module. This process is eased by the use of Trivium due to its higher throughput and lower area consumption. This research proposes optimised designs for FrodoKEM, concentrating on high throughput by parallelising the matrix multiplication operations within the cryptographic scheme.

#Hardware id trivium keygen generator iso

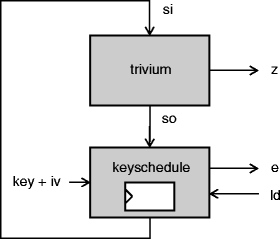

Trivium is a lightweight, ISO standard stream cipher which performs well in hardware and has been used in previous hardware designs for lattice-based cryptography. However, for many of the candidates, this module is a significant implementation bottleneck. seed-expanding), and as such most candidates utilise SHAKE, an XOF defined in the SHA-3 standard. A condition for these candidates is to use NIST standards for sources of randomness (i.e. FrodoKEM is a lattice-based key encapsulation mechanism, currently a semi-finalist in NIST’s post-quantum standardisation effort.
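The SHAKE-based seed expansion mentioned above can be illustrated with Python's `hashlib.shake_128`. The sketch below expands a short seed into an \(n \times n\) matrix over \(\mathbb{Z}_q\), one SHAKE128 call per row, in the spirit of how FrodoKEM derives its large public matrix from a seed. The function name `gen_matrix_shake` is hypothetical, and the input encoding (a 2-byte little-endian row index prepended to the seed) and the reduction of 16-bit words modulo a power-of-two \(q\) are assumptions for illustration; the FrodoKEM specification defines the exact procedure.

```python
import hashlib


def gen_matrix_shake(seed_a: bytes, n: int, q: int) -> list[list[int]]:
    """Expand seed_a into an n x n matrix over Z_q using SHAKE128.

    Assumed encoding (illustrative only): row i is read from
    SHAKE128(i as 2-byte little-endian || seed_a) as n little-endian
    16-bit words, each reduced mod q, where q is a power of two <= 2**16.
    """
    matrix = []
    for i in range(n):
        # One XOF call per row, producing 2 bytes of output per matrix entry.
        stream = hashlib.shake_128(i.to_bytes(2, "little") + seed_a).digest(2 * n)
        row = [int.from_bytes(stream[2 * j:2 * j + 2], "little") % q for j in range(n)]
        matrix.append(row)
    return matrix
```

For example, `gen_matrix_shake(seed, n=640, q=2**15)` would produce a matrix with the dimensions of FrodoKEM-640. Generating one row per call means rows can be produced on demand, so a hardware design never needs to store the whole matrix; the cost is that every row passes through the XOF, which is why the speed of this module matters so much.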
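To make the Trivium alternative concrete, here is a bit-level Python sketch of the Trivium keystream generator: a 288-bit state split into three shift registers (93, 84 and 111 bits), an 80-bit key and an 80-bit IV, and 4 × 288 initialisation clocks whose output is discarded. The function name `trivium_keystream` is hypothetical. Key and IV are passed as lists of bits in the order K1..K80 and IV1..IV80; how bytes map onto those bits differs between reference implementations, so this sketch is an assumption-laden illustration and is not claimed to reproduce published test vectors.

```python
def trivium_keystream(key_bits: list[int], iv_bits: list[int], n_bits: int) -> list[int]:
    """Return n_bits of Trivium keystream for an 80-bit key and 80-bit IV.

    key_bits / iv_bits: lists of 0/1 values in specification order
    (K1..K80, IV1..IV80). Byte-to-bit ordering is left to the caller.
    """
    if len(key_bits) != 80 or len(iv_bits) != 80:
        raise ValueError("Trivium uses an 80-bit key and an 80-bit IV")

    # 288-bit state; s[0] corresponds to s1 in the specification.
    s = key_bits + [0] * 13 + iv_bits + [0] * 4 + [0] * 108 + [1, 1, 1]

    def clock(state: list[int]) -> tuple[int, list[int]]:
        # Output bit: XOR of six state bits (two taps per register).
        t1 = state[65] ^ state[92]
        t2 = state[161] ^ state[176]
        t3 = state[242] ^ state[287]
        z = t1 ^ t2 ^ t3
        # Feedback with one AND gate per register.
        t1 ^= (state[90] & state[91]) ^ state[170]
        t2 ^= (state[174] & state[175]) ^ state[263]
        t3 ^= (state[285] & state[286]) ^ state[68]
        # Shift each register by one, inserting the feedback of the previous one.
        new_state = [t3] + state[0:92] + [t1] + state[93:176] + [t2] + state[177:287]
        return z, new_state

    # Initialisation: 4 * 288 clocks, output discarded.
    for _ in range(4 * 288):
        _, s = clock(s)

    out = []
    for _ in range(n_bits):
        z, s = clock(s)
        out.append(z)
    return out
```

The hardware appeal is visible in `clock`: each step needs only a handful of XOR and AND gates. Trivium was also designed so that newly computed state bits are not used again for at least 64 clocks, which allows up to 64 iterations to be computed in parallel. This is the kind of throughput that can feed several matrix-multiplication lanes at once.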
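The parallelisation of the matrix multiplication can be reasoned about on a product of the form \(B = AS + E \bmod q\), which appears in FrodoKEM. The Python sketch below contrasts a reference row-by-row computation with a version that processes rows of \(A\) in groups of `lanes`. It models one plausible organisation, not necessarily the one used in this work: at each step every lane multiplies its own entry of \(A\) by a shared row of \(S\), and no lane reads another lane's accumulator. Both function names are hypothetical.

```python
def matmul_add_mod(A, S, E, q):
    """Reference computation of B = A*S + E (mod q), one row at a time."""
    n, nbar = len(S), len(S[0])
    return [
        [(sum(A[i][k] * S[k][j] for k in range(n)) + E[i][j]) % q for j in range(nbar)]
        for i in range(len(A))
    ]


def matmul_add_mod_lanes(A, S, E, q, lanes):
    """Same result, with rows of A processed in groups of `lanes`.

    Hypothetical hardware model: in step k each lane multiplies its own
    entry A[i][k] by the shared row S[k] and accumulates. Lanes are
    independent, so in hardware they could run concurrently, provided the
    generator supplies `lanes` fresh entries of A per step.
    """
    rows, n, nbar = len(A), len(S), len(S[0])
    B = [[0] * nbar for _ in range(rows)]
    for base in range(0, rows, lanes):
        group = range(base, min(base + lanes, rows))
        for k in range(n):
            s_row = S[k]  # fetched once and shared by every lane in the group
            for i in group:  # independent iterations: one per lane
                a = A[i][k]
                acc = B[i]
                for j in range(nbar):
                    acc[j] = (acc[j] + a * s_row[j]) % q
        for i in group:
            B[i] = [(b + e) % q for b, e in zip(B[i], E[i])]
    return B
```

With `lanes` rows in flight, the number of sequential steps drops by roughly a factor of `lanes`, but the pseudo-random generator must now deliver `lanes` entries of \(A\) per step. This is why a high-throughput, low-area generator such as Trivium makes the parallel design easier to sustain.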
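First-order masking fits naturally with this structure because the secret-dependent product in decapsulation, of the form \(C - B'S \bmod q\), is linear in the secret \(S\). The sketch below, an illustration under that assumption rather than this work's design, splits \(S\) into two random arithmetic shares \(S = S_1 + S_2 \bmod q\) and processes each share separately, so \(S\) is never recombined. The function names are hypothetical, and the non-linear later steps of decapsulation (decoding and the re-encryption check) would need additional masking that is not shown.

```python
import secrets


def mat_mul_mod(X, Y, q):
    """Matrix product X*Y (mod q)."""
    return [
        [sum(X[i][k] * Y[k][j] for k in range(len(Y))) % q for j in range(len(Y[0]))]
        for i in range(len(X))
    ]


def share(S, q):
    """Split S into two arithmetic shares with S = S1 + S2 (mod q)."""
    S1 = [[secrets.randbelow(q) for _ in row] for row in S]
    S2 = [[(s - r) % q for s, r in zip(row, row1)] for row, row1 in zip(S, S1)]
    return S1, S2


def masked_c_minus_bs(C, Bp, S1, S2, q):
    """Return shares M1, M2 with M1 + M2 = C - Bp*S (mod q).

    Multiplication by Bp is linear, so each share is handled on its own;
    the two products are independent and could use parallel datapaths.
    """
    P1 = mat_mul_mod(Bp, S1, q)
    P2 = mat_mul_mod(Bp, S2, q)
    M1 = [[(c - p) % q for c, p in zip(rc, rp)] for rc, rp in zip(C, P1)]
    M2 = [[(-p) % q for p in rp] for rp in P2]
    return M1, M2
```

Masking roughly doubles the multiplication work, since each share needs its own product. Because the two products are independent, parallel datapaths like the lanes sketched for \(AS + E\) can absorb much of that overhead.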
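As a sanity check on the reported figures, the throughputs and speed-up factors imply approximate throughputs for the previous state of the art and an average time per operation. These values are derived here only from the numbers quoted above. They are approximate because the speed-up factors are rounded, and the per-operation times assume operations are not overlapped.

\[
\text{previous}_{\text{encaps}} \approx \frac{825}{16} \approx 51.6\ \text{ops/s}, \qquad
\text{previous}_{\text{decaps}} \approx \frac{763}{14} = 54.5\ \text{ops/s},
\]
\[
t_{\text{encaps}} = \frac{1}{825}\ \text{s} \approx 1.21\ \text{ms}, \qquad
t_{\text{decaps}} = \frac{1}{763}\ \text{s} \approx 1.31\ \text{ms}.
\]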
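The shortest-vector problem underlying lattice-based schemes can be illustrated in two dimensions. The toy Python sketch below, with the hypothetical function `shortest_vector_2d`, enumerates small integer combinations of two basis vectors and returns the shortest non-zero lattice vector found. The example basis \((5,3), (3,2)\) consists of long vectors, yet it spans all of \(\mathbb{Z}^2\), because its determinant is 1, and so contains vectors of length 1. A poor basis hides short vectors in exactly this way. Brute force only works because the dimension is tiny; the search space grows exponentially with dimension, and the lattices used in practice have hundreds of dimensions.

```python
from itertools import product


def shortest_vector_2d(b1, b2, bound=10):
    """Brute-force a shortest non-zero vector of the lattice spanned by b1, b2.

    Only coefficients in [-bound, bound] are tried, so the answer is exact
    only if some shortest vector has coefficients in that range.
    Returns (vector, squared Euclidean norm).
    """
    best, best_norm2 = None, None
    for x, y in product(range(-bound, bound + 1), repeat=2):
        if x == 0 and y == 0:
            continue  # the zero vector is excluded by definition
        v = (x * b1[0] + y * b2[0], x * b1[1] + y * b2[1])
        norm2 = v[0] ** 2 + v[1] ** 2
        if best_norm2 is None or norm2 < best_norm2:
            best, best_norm2 = v, norm2
    return best, best_norm2
```

For instance, `shortest_vector_2d((5, 3), (3, 2))` finds a vector of squared norm 1 (for example \(2 \cdot (5,3) - 3 \cdot (3,2) = (1, 0)\)), even though neither basis vector is short.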